Recently I had a discussion with another forum user on the VMware Communities about different network load balancing methods. Afterwards I decided that Physical NIC Load, or Load Based Teaming (referred to as LBT from here on), was not only the best method for my requirements but would probably be the right method for other administrators using vCenter and vNetwork Distributed Switches (vDS).

While it might take a bit more effort to set up a vDS initially, it simplifies configuration in larger environments and makes adding new hosts much easier.

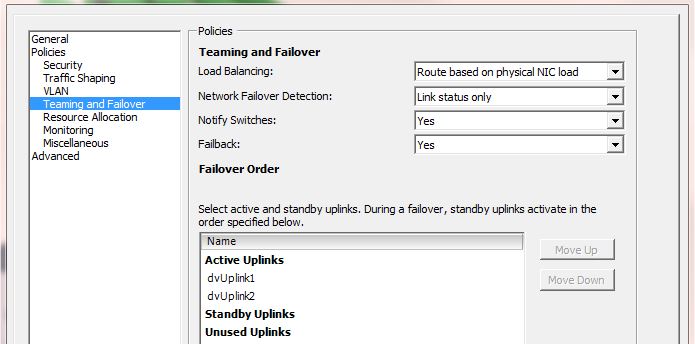

LBT balances VM network traffic across a host's physical NICs in a vDS, based on the load of each of those NICs (wow that’s a mouthful). See below for an example.

Scenario

- 1 host with 2 NICs

- All NICs in the same vDS

- 5 Virtual Machines running on this host

- All 5 VMs using the same Distributed Port Group in the above vDS

By default, LBT moves a VM to a different NIC if the load on its current NIC stays above 75% for 30 seconds. This default value can be adjusted, and current utilisation can be monitored using ‘esxtop’ from the ESX shell. If the utilisation of NIC1 never exceeds the configured threshold, all 5 VMs might run on the same NIC. As soon as usage stays above 75% for 30 seconds, one or more VMs will be moved to NIC2.
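To make the threshold behaviour concrete, here is a rough Python sketch of the decision described above. It is a simplified model, not VMware's actual algorithm: the `Nic` class, the `rebalance` function, the NIC and VM names, the per-VM loads and the rule "move the busiest VM to the least loaded NIC" are all assumptions made for illustration. Only the 75% and 30 second defaults come from the text.

```python
from dataclasses import dataclass, field

THRESHOLD = 0.75       # fraction of NIC capacity (default quoted above)
WINDOW_SECONDS = 30    # how long the load must stay above the threshold


@dataclass
class Nic:
    name: str
    capacity_mbps: float
    vms: dict = field(default_factory=dict)  # VM name -> current load in Mbps

    def utilisation(self) -> float:
        return sum(self.vms.values()) / self.capacity_mbps


def rebalance(nics, seconds_over):
    """Move one VM off every NIC that has been over THRESHOLD for the full window.

    seconds_over maps a NIC name to the seconds it has spent above the threshold.
    Returns a list of (vm, from_nic, to_nic) moves.
    """
    moves = []
    for nic in nics:
        over_long_enough = seconds_over.get(nic.name, 0) >= WINDOW_SECONDS
        if nic.utilisation() <= THRESHOLD or not over_long_enough:
            continue
        others = [n for n in nics if n is not nic]
        if not others or not nic.vms:
            continue
        # Assumption: pick the least loaded NIC and move the busiest VM to it.
        target = min(others, key=Nic.utilisation)
        vm = max(nic.vms, key=nic.vms.get)
        target.vms[vm] = nic.vms.pop(vm)
        moves.append((vm, nic.name, target.name))
    return moves


# The scenario above: 1 host, 2 NICs, 5 VMs that all start on the first NIC.
nic1 = Nic("vmnic0", 1000, {"vm1": 300, "vm2": 200, "vm3": 150, "vm4": 100, "vm5": 50})
nic2 = Nic("vmnic1", 1000)

# vmnic0 sits at 80%, but only for 10 seconds so far: nothing moves.
print(rebalance([nic1, nic2], {"vmnic0": 10}))   # []

# Once it has been above 75% for 30 seconds, one VM is moved to vmnic1.
print(rebalance([nic1, nic2], {"vmnic0": 30}))   # [('vm1', 'vmnic0', 'vmnic1')]
```

In this toy run vmnic0 drops from 80% to 50% and vmnic1 rises to 30%. A real host would also need to avoid overloading the target NIC, which this sketch ignores.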

Positives

- Very scalable

- No additional configuration needed on the physical switch (EtherChannel etc. is not required)

- Can balance each host across multiple switches for redundancy (without the need for a stack)

- RX and TX monitored individually

Negatives

- Each VM is limited to the speed of one physical NIC. So if your host has four 1 Gbps interfaces, each VM will only be able to utilise 1 Gbps.

This isn’t as big a problem as you might think. Even with the other load balancing methods, traffic between two endpoints (e.g. Server A <> Client A) is always limited to the speed of one interface. But if you have one server that services multiple clients and needs more bandwidth than a single interface can provide, you should not use LBT.
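The arithmetic behind that limit is simple enough to sketch in a few lines of Python. The function names and the 1.5 Gbps requirement are made up for illustration; the four 1 Gbps interfaces are the example from the list above.

```python
def per_vm_ceiling_gbps(nic_speeds_gbps):
    # Under LBT a VM uses one physical NIC at a time,
    # so its ceiling is the speed of a single NIC.
    return max(nic_speeds_gbps)


def host_aggregate_gbps(nic_speeds_gbps):
    # All VMs together can still fill every NIC on the host.
    return sum(nic_speeds_gbps)


nics = [1, 1, 1, 1]      # four 1 Gbps interfaces
required_gbps = 1.5      # hypothetical server that feeds many clients

print(per_vm_ceiling_gbps(nics))                    # 1
print(host_aggregate_gbps(nics))                    # 4
print(required_gbps <= per_vm_ceiling_gbps(nics))   # False
```

So the host as a whole can push 4 Gbps, but a single VM that needs 1.5 Gbps will not get it with LBT.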